VizCrit: Exploring Strategies for Displaying Computational Feedback in a Visual Design Tool

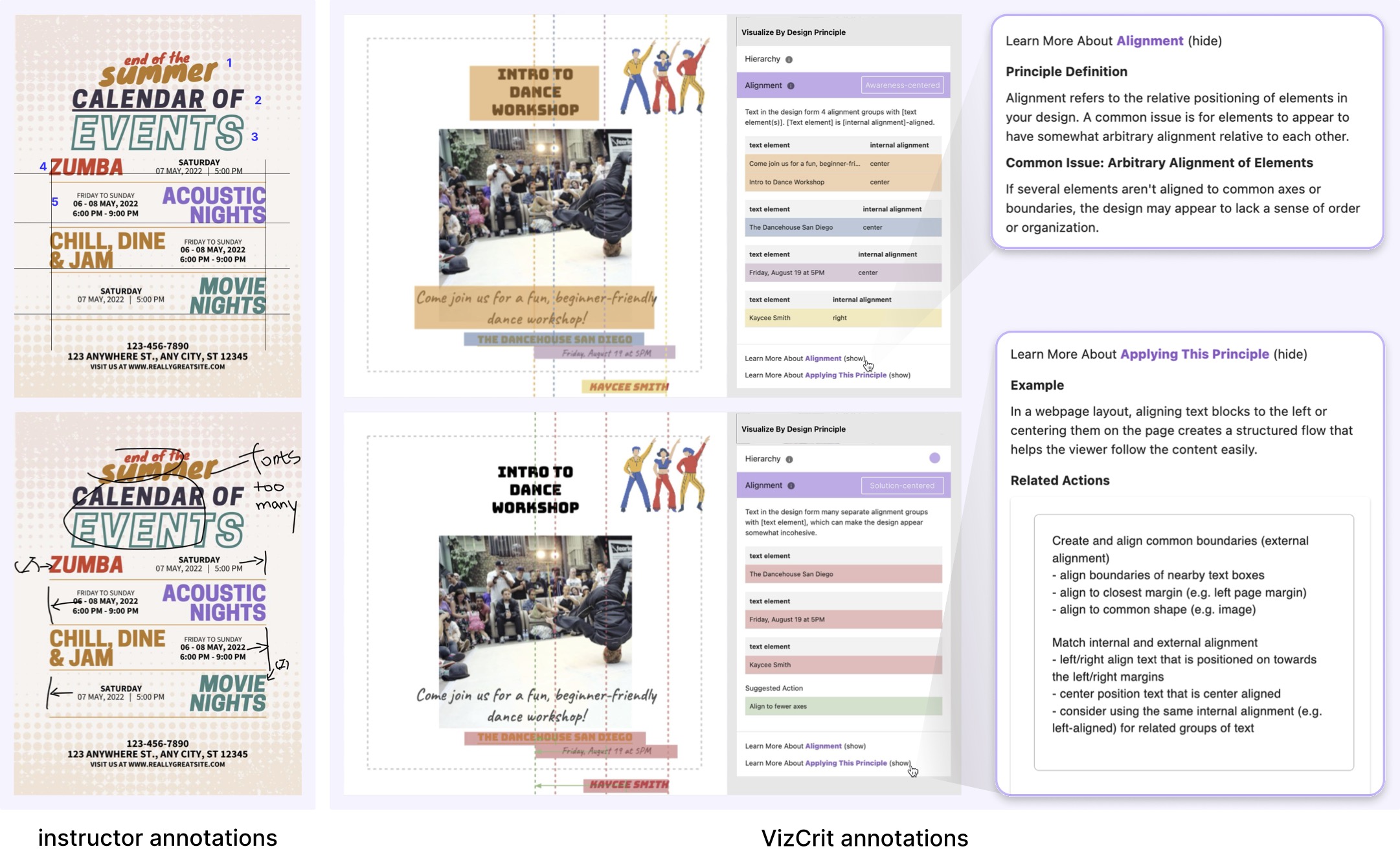

Figure 1. We introduce VizCrit, a design feedback tool that offers feedback at three levels of actionability: textbook-based feedback as static text, and awareness-centered and solution-centered feedback as adaptive visual annotations. To design the interactive annotations, we observed how expert designers and instructors provide situated feedback (left), then co-designed a set of visual annotations (right) for four core design principles (Alignment is shown here). For each annotation, we designed algorithms that heuristically compute the overlays displayed on the visual design, including issue detection for solution-centered feedback. A user evaluation with novices explores how the level of feedback actionability influences novices' process-related behaviors, learning of principles, perceptions of creativity, and overall outcomes.
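The caption mentions heuristic algorithms that detect issues for the Alignment principle. The sketch below is not VizCrit's implementation. It illustrates one plausible heuristic under stated assumptions: elements are axis-aligned boxes, only left edges are checked, and a "near miss" is a left edge within a hypothetical tolerance `tol` of a shared edge that does not match it exactly. The names `Element`, `left_edge_groups`, and `detect_alignment_issues` are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """A design element as an axis-aligned box (hypothetical representation)."""
    name: str
    x: float  # left edge, in px
    y: float  # top edge, in px
    w: float
    h: float


def left_edge_groups(elements, tol=8.0):
    """Group elements whose left edges lie within `tol` px of the group's leftmost edge."""
    groups = []
    for el in sorted(elements, key=lambda e: e.x):
        if groups and el.x - groups[-1][0].x <= tol:
            groups[-1].append(el)
        else:
            groups.append([el])
    return groups


def detect_alignment_issues(elements, tol=8.0, eps=0.5):
    """Return (element name, dx) pairs for near-miss left edges.

    Within each group, the most common left edge is treated as the intended
    alignment line (ties go to the leftmost edge). Any element off that line by
    more than `eps` px is flagged, with dx = the horizontal move that would fix it.
    """
    issues = []
    for group in left_edge_groups(elements, tol):
        if len(group) < 2:
            continue  # a lone element has nothing to align with
        counts = Counter(e.x for e in group)
        target = min(counts, key=lambda x: (-counts[x], x))
        for e in group:
            if abs(e.x - target) > eps:
                issues.append((e.name, target - e.x))
    return issues


if __name__ == "__main__":
    design = [
        Element("title", 40, 30, 300, 40),
        Element("body", 40, 90, 300, 120),
        Element("button", 43, 230, 120, 36),
        Element("photo", 200, 300, 160, 120),
    ]
    print(detect_alignment_issues(design))  # [('button', -3)]
```

Treating the most common edge as the intended line is a simple majority heuristic; a fuller detector would likely also check right edges, centers, and vertical alignment.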

Abstract

Visual design instructors often provide multi-modal feedback, mixing annotations with text. Prior theory emphasizes the importance of actionable feedback, where “actionability” lies on a spectrum—from surfacing relevant concepts to suggesting concrete fixes. How might creativity tools implement annotations that support such feedback, and how does the actionability of feedback impact novices' process-related behaviors, perceptions of creativity, learning of design principles, and overall outcomes? We introduce VizCrit, a system for providing computational feedback that supports the actionability spectrum, realized through algorithmic issue detection and visual annotation generation. In a between-subjects study (N=36), novices revised a design under one of three conditions: textbook-based, awareness-centered, or solution-centered feedback. We found that solution-centered feedback led to fewer design issues and higher self-perceived creativity compared with textbook-based feedback, although expert ratings on creativity showed no significant differences. We discuss the implications for AI in Creativity Support Tools, including the potential of calibrating feedback actionability to help novices balance productivity with learning, growth, and developing design awareness.
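To make the actionability spectrum concrete, here is a hypothetical sketch of how one detected alignment issue could be rendered under each of the three study conditions. Textbook-based feedback returns the same static principle text regardless of the design. Awareness-centered feedback draws shared guide lines without singling out an element. Solution-centered feedback names the offending element and a concrete move. The data format, the principle wording, and the names `Actionability`, `AlignmentIssue`, and `render_feedback` are assumptions for illustration, not VizCrit's API.

```python
from dataclasses import dataclass
from enum import Enum


class Actionability(Enum):
    TEXTBOOK = "textbook"
    AWARENESS = "awareness"
    SOLUTION = "solution"


@dataclass(frozen=True)
class AlignmentIssue:
    element: str     # element whose left edge misses the shared line
    group: tuple     # names of all elements near that line
    target_x: float  # x position of the shared alignment line
    dx: float        # horizontal move that would fix the element


# Placeholder principle text (hypothetical wording, not quoted from VizCrit).
ALIGNMENT_TEXT = ("Alignment: place elements along shared edges so that each "
                  "element visually connects to the others.")


def render_feedback(level, issues):
    """Turn detected issues into display items for one actionability level."""
    if level is Actionability.TEXTBOOK:
        # Static text: identical regardless of the user's design.
        return [{"type": "text", "body": ALIGNMENT_TEXT}]
    if level is Actionability.AWARENESS:
        # One guide line per alignment group; the offending element is not named.
        lines = sorted({(i.target_x, i.group) for i in issues})
        return [{"type": "guide_line", "x": x, "elements": list(g)} for x, g in lines]
    # SOLUTION: point at the specific element and suggest a concrete fix.
    return [
        {
            "type": "fix",
            "element": i.element,
            "dx": i.dx,
            "body": (f"Move {i.element} {abs(i.dx):g}px "
                     f"{'left' if i.dx < 0 else 'right'} to align at x={i.target_x:g}."),
        }
        for i in issues
    ]


if __name__ == "__main__":
    issue = AlignmentIssue("button", ("title", "body", "button"), 40.0, -3.0)
    for level in Actionability:
        print(level.value, render_feedback(level, [issue]))
```

Keeping detection separate from rendering means the same issue list can drive all three conditions, so only the presentation (and its actionability) varies between them.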

Supplementary Material: Download All | User Study Designs

The supplementary materials contain the co-design study materials, the user evaluation materials, and the user study designs, which include all participants' designs from the evaluation study.

Code: GitHub

Video: YouTube

BibTeX

@inproceedings{li2026vizcrit,
  author = {Li, Mingyi and Chen, Mengyi and Luo, Sarah and Cao, Yining and Xia, Haijun and Das, Maitraye and Dow, Steven P. and E, Jane L.},
  title = {VizCrit: Exploring Strategies for Displaying Computational Feedback in a Visual Design Tool},
  year = {2026},
  isbn = {9798400722783},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  url = {https://doi.org/10.1145/3772318.3791579},
  doi = {10.1145/3772318.3791579},
  booktitle = {Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems},
  numpages = {23},
  location = {Barcelona, Spain},
  series = {CHI'26}
}